News

Devico Named in G2's Top 10 Services by G2 Score — Summer 2026

Jun 2nd 26 - by Devico Team

Devico earned a 92.52 G2 Score in the Summer 2026 Reports, ranking among the Top 10 Services globally across 27,340+ reports.

Digital transformation

October 27, 2023 - by Devico Team

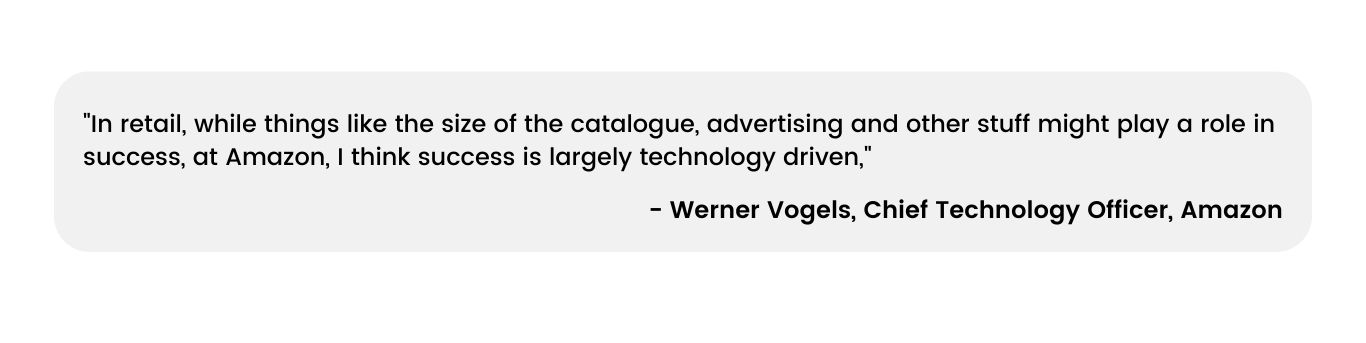

Businesses are always looking for ways to improve their operations and stay ahead of the competition. That's why data plays a crucial role in transforming the future. Big Data, which is characterized by its sheer volume, velocity, and variety, is at the forefront of digital transformation initiatives.

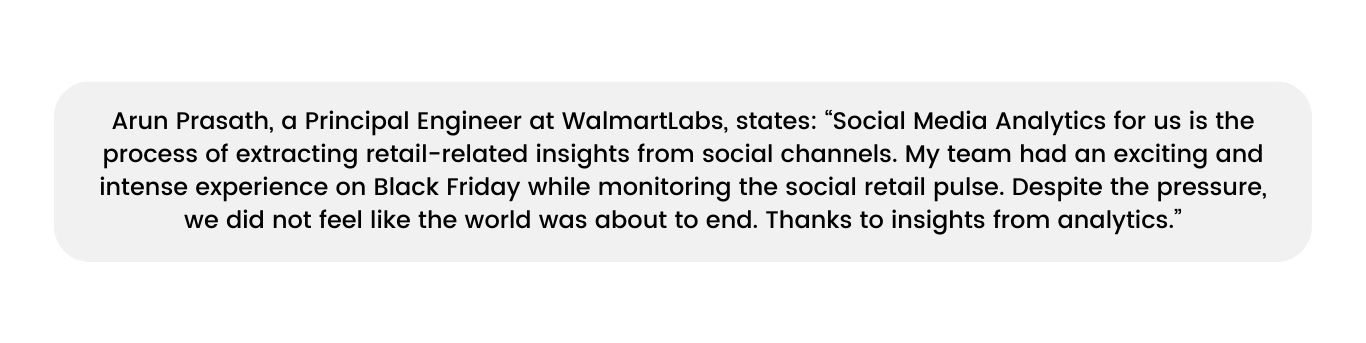

Walmart is a great example of a company that uses big data to optimize its supply chain management. By analyzing customer data, weather patterns, and other factors, Walmart has been able to manage its inventory better, reduce waste, and improve its profits. The company has reported a 10% increase in sales due to its use of big data analytics.

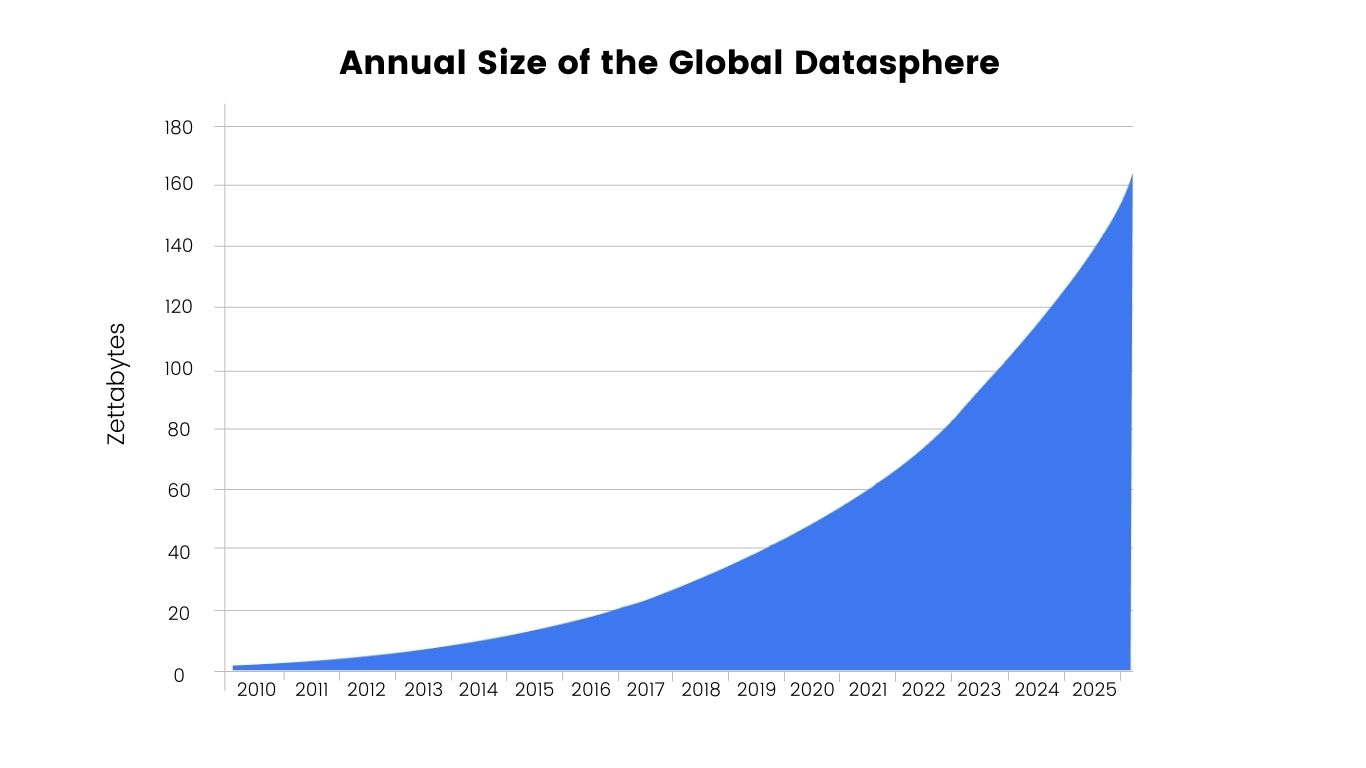

This is just one example of how big data is transforming businesses across industries. Consider Gartner’s research: the analyst firm states that by the end of 2023, over 50% of large enterprises will have institutionalized a culture that will “admire” data, compared with less than 5% in 2020. There is an interesting tension here: IoT makes people's lives easier but makes developers’ lives harder, because IoT expansion has led to an exponential rise in data volumes. It is estimated that the digital universe will grow to 175 zettabytes in just 2 years, with data growing at a rate of around 40-50% annually. However, merely collecting data is not enough. It is how companies analyze and extract meaningful insights from this data that determines their success in the digital era.

By leveraging data analytics in digital transformation, businesses can automate processes, improve decision-making, and create new business models. This is why analytics is a critical component of digital transformation (DT).

Digital transformation data can provide numerous benefits to businesses. This technology can transform the way organizations function and compete in the digital landscape by offering a wealth of advantages that span various aspects of operations and decision-making. The advantages are both substantial and multifaceted, making it an essential tool for enhancing business performance.

Improved Decision-Making: Big Data empowers organizations to make informed, data-driven decisions with unparalleled precision. By aggregating and analyzing vast amounts of structured and unstructured data, businesses gain deeper insights into market trends, customer behavior, and operational performance. For instance, Netflix leverages Big Data to recommend personalized content to its subscribers, resulting in increased user engagement and satisfaction.

Increased Efficiency: The integration of Big Data analytics streamlines operations and drives efficiency gains. For example, General Electric (GE) uses Big Data to optimize its supply chain, reducing maintenance costs by 25% and downtime by up to 5%. This level of operational efficiency has a direct impact on the bottom line. Netflix, mentioned above, also analyzes viewing data to identify popular genres and commission original content accordingly. This has helped the company's originals consistently feature among the most watched shows.

Enhanced Product Development: Big Data fuels innovation by providing insights into customer preferences and market gaps. Amazon's success in launching Echo, powered by its AI assistant Alexa, is a testament to the power of Big Data-driven product development. The device's success hinged on its ability to gather and process data on user interactions to continually improve its services and features. In case you missed it, Alexa is getting a more human-like voice thanks to generative AI and customers’ feedback (analysis, analysis everywhere!).

Increased Customer Satisfaction: Big Data facilitates a deeper understanding of customer needs and preferences, enabling businesses to provide more personalized experiences. Starbucks, for example, leverages Big Data analytics to customize marketing campaigns and offerings for individual stores, significantly enhancing customer satisfaction and loyalty. Even a hypothetical airline could analyze flier preferences and behaviors to offer personalized upgrades, loyalty programs, and services.

Improved Risk Management: Businesses face an array of risks, from financial uncertainties to cybersecurity threats. Big Data analytics provides the tools to identify, assess, and mitigate these risks effectively. Financial institutions like JPMorgan Chase employ Big Data to identify and combat fraudulent transactions, safeguarding their customers and assets.

Building a robust Big Data transformation strategy is essential for organizations aiming to harness the full potential of data analytics in their digital transformation journey. It entails a systematic approach that aligns data initiatives with overarching business objectives, ensuring that the use of data is purposeful and impactful. We realize that such wording abounds on the internet and is often skipped. However, below we will give examples that actually contain the whole point.

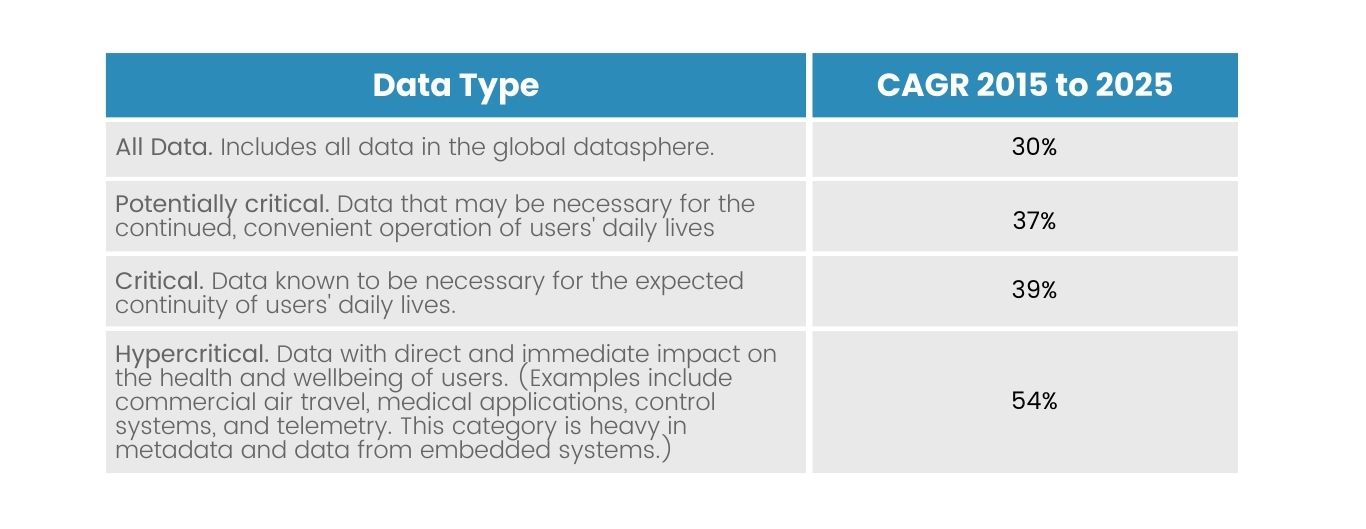

Assessing Data Needs: Once the business goals are defined, the next step is to assess the data needs. This involves evaluating what types of data are required to support the objectives. The table of data types from the beginning of the article is helpful here. The healthcare industry also provides an illustrative example. Hospitals and medical institutions increasingly rely on Big Data analytics to improve patient care and reduce costs. They collect patient data from various sources, including electronic health records, wearables, and genetic information, to gain comprehensive insights into individual health profiles. We know this because Devico has been developing custom healthcare solutions for the last 10 years.

Developing Data Governance Policies: Effective data governance policies are the bedrock of a successful Big Data strategy. These policies address issues related to data privacy, security, quality, and compliance. Take the example of financial institutions like Capital One. They have stringent data governance policies in place to safeguard customer information and ensure regulatory compliance. These policies provide the necessary framework for responsible data management and usage.

Choosing the Right Technology: Selecting the appropriate Big Data technologies, such as Hadoop, Spark, or NoSQL databases, to store and analyze large amounts of data is a critical decision. For example, a marketing agency may use Hadoop to analyze customer data and identify trends and patterns that can be used to create targeted marketing campaigns. Netflix, as another example, relies on a suite of Big Data technologies, including Apache Cassandra and Apache Kafka, to process vast amounts of streaming data in real time, enabling a seamless user experience.

When it comes to analytics, organizations rely on a range of technologies and tools to collect, process, and gain valuable insights from vast datasets. You probably know them all, but here are some nuances of the top big data technologies and tools that businesses should consider:

Hadoop’s pros: Hadoop is a tool that allows businesses to store and process large amounts of data. It can handle different types of data, making it versatile. Hadoop's MapReduce programming model helps businesses process data in parallel across many machines, while its Hadoop Distributed File System (HDFS) provides a reliable storage solution. The tool's main strength is its ability to scale horizontally, meaning it can handle petabytes of data. For instance, Facebook employs Hadoop to analyze user behavior and optimize its content recommendations, resulting in a more engaging user experience.

Hadoop’s cons: It is a good tool, but there is always a “but”. It can be tough to set up and maintain, and firms may need staff with specialized skills to use it effectively. Further, Hadoop is designed for batch processing of large data sets and may not keep up with the speed and responsiveness required by real-time applications. The last pitfall is that it is optimized for large data sets and may not be efficient or cost-effective for smaller ones.
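To make the MapReduce idea concrete, here is a minimal single-process sketch in plain Python. It is not Hadoop code and does not use any Hadoop API; the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) are made up for illustration. It only shows the three conceptual steps that a cluster would run in parallel across nodes.

```python
from collections import defaultdict
from itertools import chain


def map_phase(document):
    """Map step: emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(pairs):
    """Shuffle step: group all emitted values by their key."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce step: collapse each key's list of values into one count."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(documents):
    # On a real cluster each document (or file block) would be mapped on a
    # different node; here the "nodes" are simply loop iterations.
    mapped = chain.from_iterable(map_phase(doc) for doc in documents)
    return reduce_phase(shuffle_phase(mapped))


if __name__ == "__main__":
    docs = ["big data big insights", "data drives decisions"]
    print(word_count(docs))
```

Because the map step touches each document independently, adding more machines lets more documents be processed at once, which is the horizontal scaling described above.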

Spark pros: Apache Spark is another powerful Big Data tool for digital transformation. It is known for its ability to process data in memory, which is much faster than traditional MapReduce processing. It is a versatile platform that supports multiple programming languages, making it accessible to both data engineers and data scientists. For instance, Airbnb uses Spark to process and analyze vast amounts of data, enabling personalized recommendations for its users.

Spark cons: The catch is that Spark can also be difficult to configure and maintain, and companies may need narrowly specialized experts to run it. Additionally, Spark's in-memory processing can be resource-intensive, which may limit its usefulness for some applications.

NoSQL Databases pros: These are a type of database that is specifically designed to handle data that is unstructured or semi-structured. They offer a flexible and scalable approach for managing diverse data types, which makes them particularly valuable for applications like real-time analytics and content management systems. MongoDB is a popular NoSQL database management system that is used by businesses such as eBay and The New York Times. MongoDB is known for its ability to handle large amounts of data, its powerful indexing capabilities, and its support for dynamic queries and updates. Its flexible data model also allows for rapid development and iteration, making it a popular choice for developers working on complex applications.

NoSQL Databases cons: However, NoSQL databases can be complex to set up and maintain (wow, unexpected, right?!), and businesses may require specialized skills to use them effectively. They may also not be the best choice for applications that require complex queries or transactions.
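The flexible data model described above can be illustrated without any database at all. The sketch below uses plain Python dictionaries as stand-in "documents" and a hypothetical `find` helper; it is not MongoDB's API, only an illustration of how records in one collection can carry different fields and still be queried dynamically.

```python
def find(collection, **criteria):
    """Return documents whose fields equal every key/value in criteria.

    Documents that lack a queried field simply do not match, so no
    shared schema is required across the collection.
    """
    return [
        doc for doc in collection
        if all(field in doc and doc[field] == value
               for field, value in criteria.items())
    ]


# Three documents in one collection, each with a different shape.
articles = [
    {"id": 1, "type": "article", "title": "Election night", "tags": ["politics"]},
    {"id": 2, "type": "video", "title": "Match recap", "duration_s": 94},
    {"id": 3, "type": "article", "title": "Market wrap", "author": {"name": "A. Writer"}},
]

if __name__ == "__main__":
    print([doc["title"] for doc in find(articles, type="article")])
```

Adding a new field to one document requires no migration of the others, which is what makes iteration fast. The trade-off, as noted in the cons, is that joins and multi-document transactions are harder to express.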

Data Visualization Tools: Data visualization tools play a pivotal role in extracting actionable insights from complex datasets. They enable organizations to transform raw data into comprehensible visualizations, making it easier for decision-makers to understand trends and patterns. Tableau, a popular data visualization tool, is used by organizations like Pfizer to visualize clinical trial data, facilitating faster drug development and regulatory approval.

Big data security and privacy are major concerns for businesses that collect, store, and analyze large amounts of information. Addressing these concerns takes a multifaceted set of security measures to safeguard sensitive data.

Access Controls: Some say that determined hackers can get to any data if they really want to. Don't believe it: this is where access control comes in. It entails defining who can access specific data sets and what actions they can perform. Access control mechanisms are all about protecting data from being taken over by third-party attackers. Such measures include user authentication, role-based access control, and data access monitoring. These tools are critical, for example, for a healthcare provider, which must ensure that only authorized personnel have access to sensitive data.
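Role-based access control and access monitoring can be sketched in a few lines. The roles, actions, and user names below are hypothetical and chosen to match the healthcare example; a production system would load them from an identity provider and write the log to tamper-resistant storage.

```python
# Hypothetical role-to-permission mapping for a healthcare provider.
ROLE_PERMISSIONS = {
    "physician": {"read_record", "update_record"},
    "billing_clerk": {"read_invoice"},
    "auditor": {"read_record", "read_invoice"},
}

# Every request is recorded, whether it was allowed or not.
access_log = []


def is_allowed(role, action):
    """Return True only if the role explicitly grants the action."""
    return action in ROLE_PERMISSIONS.get(role, set())


def request_access(user, role, action):
    """Check a request against the role mapping and log the outcome."""
    allowed = is_allowed(role, action)
    access_log.append((user, role, action, allowed))
    return allowed


if __name__ == "__main__":
    print(request_access("dr_lee", "physician", "update_record"))
    print(request_access("sam", "billing_clerk", "read_record"))
    print(access_log)
```

The design is deny-by-default: an unknown role or an unlisted action is refused, and the denied attempt still lands in the log for later review.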

Data Encryption: Encryption plays a vital role in securing data during transmission and storage. Encrypted data is indecipherable to unauthorized users who lack the encryption keys. Of course, there have been many cases of stolen information. Need an example? Here is one.

Recall 2017, the year the Equifax story happened. Equifax, a credit reporting company, received a notice from the US Department of Homeland Security's Computer Emergency Readiness Team (CERT) regarding an Apache Struts vulnerability. Despite sending an internal email about the flaw, Equifax failed to take the necessary steps to address the vulnerability. An automatic scan also failed to identify the problematic version of Apache Struts. Additionally, an expired digital certificate on a device meant to inspect encrypted traffic left that traffic uninspected. As a result of these oversights, an attacker was able to gain access to Equifax's system from mid-May to the end of July, compromising the sensitive information of millions of individuals. Mostly, though, data breaches happen due to human factors: according to the 2022 Global Risks Report released by the World Economic Forum, 95% of cybersecurity threats in 2022 were caused by human error.

In such cases, encryption can play a crucial role. It safeguards data against interception during transfer and ensures that even if the data is breached, it remains unreadable to malicious actors.

Data Masking: Call it a method, a tool, or a technique: data masking conceals sensitive information while preserving its format and usability. It involves replacing or obfuscating sensitive data with fictional or pseudonymous values. This allows organizations to share data for testing or analytics while protecting individuals' privacy and sensitive details. For example, a retail business may use data masking to protect customer data during website development.
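As a rough illustration of format-preserving masking, the sketch below hides most digits of a card number while keeping its separators, and swaps the local part of an email address for a salted hash. The function names and the `demo-salt` value are assumptions for the example; real deployments would use vetted masking tools and keep any salt secret.

```python
import hashlib


def mask_card_number(card_number, visible=4):
    """Replace all but the last `visible` digits with '*', keeping separators."""
    total_digits = sum(ch.isdigit() for ch in card_number)
    digits_to_mask = max(total_digits - visible, 0)
    masked = []
    for ch in card_number:
        if ch.isdigit() and digits_to_mask > 0:
            masked.append("*")
            digits_to_mask -= 1
        else:
            masked.append(ch)
    return "".join(masked)


def pseudonymize_email(email, salt="demo-salt"):
    """Swap the local part for a stable pseudonym; the domain is kept."""
    local, _, domain = email.partition("@")
    digest = hashlib.sha256((salt + local).encode("utf-8")).hexdigest()[:10]
    return f"user_{digest}@{domain}"


if __name__ == "__main__":
    print(mask_card_number("4111-1111-1111-1234"))
    print(pseudonymize_email("jane@example.com"))
```

The masked values still look like a card number and an email address, so test environments and analytics jobs that validate formats keep working. The same input always maps to the same pseudonym, which preserves joins across tables but also means the salt must be protected.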

Data Retention Policies: To manage data effectively, organizations need clear policies for retaining data. These policies determine how long data should be kept, when it should be deleted, and under what circumstances. By following them, companies can reduce the risks associated with keeping unnecessary data and ensure that they comply with data protection laws. Generally, these policies cover archiving, deleting, and backing up data. For example, a legal firm may establish data retention policies (DRPs) to ensure that client data is retained only for as long as necessary and is disposed of securely.
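A retention policy ultimately boils down to a rule that compares a record's age with an allowed period. The sketch below shows that rule in Python; the record types and the retention periods are invented for illustration and are not legal guidance.

```python
from datetime import date, timedelta

# Hypothetical retention periods per record type (not legal advice).
RETENTION_PERIODS = {
    "client_file": timedelta(days=7 * 365),
    "web_log": timedelta(days=90),
}


def retention_action(record_type, created_on, today):
    """Decide what to do with a record based on its age.

    Returns "keep", "delete", or "review" when no policy is defined
    for the record type, so unknown data is never silently dropped.
    """
    period = RETENTION_PERIODS.get(record_type)
    if period is None:
        return "review"
    return "delete" if today - created_on > period else "keep"


if __name__ == "__main__":
    today = date(2023, 10, 27)
    print(retention_action("web_log", date(2023, 1, 1), today))
    print(retention_action("client_file", date(2020, 1, 1), today))
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the rule easy to test and to re-run for audits.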

The future of Big Data is marked by exciting developments that promise to reshape industries and drive innovation. As data continues to grow at an exponential rate, the following trends are expected to dominate the landscape:

Artificial Intelligence (AI) Integration: AI and machine learning will play an increasingly vital role in harnessing the potential of Big Data. These technologies will enable more sophisticated data analytics, predictive modeling, and automation of decision-making processes. Lofty phrases aside, AI really is reshaping the way we think. A McKinsey survey found that organizations that invest in AI and machine learning achieve a 5-10% increase in customer satisfaction, on average. Not a huge jump, but not bad either.

Edge Computing: With the expansion of IoT devices, data processing is moving closer to the source. Edge computing allows for real-time analysis of data at the edge of the network, reducing latency and enabling faster response times. IoT Analytics states that processed IoT data increased by 10% from 2018. At first glance this is not impressive, but look at it from another angle: each percent of that data amounts to terabytes of information. As more devices become connected to the internet, businesses are collecting more data than ever before. By analyzing this data, businesses can gain insights into customer behavior, product usage, and other factors that can be used to improve their operations.

Data Ethics and Privacy: As data collection expands, so do concerns about privacy and ethical use. The future of Big Data, and of the tools connected with it, will likely include stricter regulations, greater emphasis on data security, possibly blockchain developments, and a growing focus on ethical data handling.

Quantum Computing: The advent of quantum computing holds the promise of solving complex Big Data problems that are currently beyond the capabilities of classical computers. This has the potential to revolutionize fields such as cryptography and drug discovery. IBM's Quantum Hummingbird processor, introduced in 2020, marked a significant step toward quantum computing for commercial applications. More is to come, in 2024 at least, as IBM promises.

Data-driven Sustainability: Big Data will play a pivotal role in addressing global challenges like climate change. Data analytics will be used to optimize resource utilization, reduce waste, and drive sustainability initiatives. By the way, the World Economic Forum stated in 2021 that data analytics and IoT technologies can reduce greenhouse gas emissions by up to 15% in sectors like agriculture and transport. Greta Thunberg hits the like button.

Big Data has already demonstrated its transformative potential across various industries, ushering in new possibilities and improving decision-making processes. Here are a few standout examples.

In the world of sports analytics, organizations like the NBA have leveraged Big Data to gain deep insights into player performance and game strategies. By tracking player movements and interactions using sensors and cameras, teams can optimize their tactics and enhance their chances of success. Turner Sports, the NBA, and SAP have been collaborating on "GM School Powered by SAP" since 2019. The partnership aims to equip next-generation front-office candidates with knowledge not only about the salary cap and luxury tax but also about sports tech, big data, and scouting. This comprehensive approach recognizes the importance of understanding the latest technologies and data analytics in sports management.

Retail giants like Amazon use Big Data to personalize shopping experiences based on behavior, product preferences, and weather patterns.

In the financial sector, Big Data is utilized for fraud detection, risk assessment, and algorithmic trading. Banks, for example, use Big Data analytics to monitor transactions for unusual patterns, identifying and preventing fraudulent activities.
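One simple way to picture "monitoring transactions for unusual patterns" is a z-score check against a customer's own spending history. The sketch below is a toy illustration with made-up amounts and a hypothetical `flag_unusual` function; real fraud systems combine many signals and far more sophisticated models.

```python
from statistics import mean, stdev


def flag_unusual(history, new_amount, threshold=3.0):
    """Flag new_amount if it lies more than `threshold` sample standard
    deviations away from the mean of the customer's past amounts."""
    if len(history) < 2:
        return False  # not enough data to estimate the spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # All past amounts are identical: any different amount is unusual.
        return new_amount != mu
    return abs(new_amount - mu) / sigma > threshold


if __name__ == "__main__":
    past_amounts = [42.0, 38.5, 51.0, 45.2, 40.3]
    for amount in (47.0, 900.0):
        print(amount, flag_unusual(past_amounts, amount))
```

A flagged transaction would typically be routed for review or step-up verification rather than blocked outright, since a large but legitimate purchase also looks like an outlier.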

In manufacturing, Industry 4.0 is like a conductor leading an orchestra. Just as the conductor uses every member of the orchestra to create a harmonious sound, manufacturers use data analytics and sensors to optimize production processes and improve product quality. Think of it as a symphony of technology, where the Industrial Internet of Things (IIoT) is the sheet music and Big Data is the notes played by each instrument. Like a musician following a conductor's directions, manufacturers rely on IIoT and Big Data to reduce downtime and ensure their production processes are in perfect harmony.

Big data digital transformation has played a pivotal role in the growth and development of the transportation industry. With the advent of data-driven algorithms, companies like Uber have been able to revolutionize the way people travel. By leveraging big data, Uber has been able to optimize driver routes, reduce wait times, and improve overall transportation efficiency.

With the help of data-driven technology, Uber is able to analyze real-time traffic data and use it to predict the best routes for its drivers. This not only saves time but also reduces fuel consumption and helps to minimize the environmental impact of transportation.

Furthermore, Uber's data-driven approach has helped to improve the overall safety of its riders. By analyzing trip data, Uber is able to identify patterns and trends that could pose a risk to riders and take corrective action.

Big Data emerges as the driving force behind digital transformation. Its capacity to enhance decision-making, optimize operations, and fuel innovation is nothing short of transformative. As we look to the future, Big Data is poised to take on an even more central role, influencing industries, shaping customer experiences, and revolutionizing the way we do business.

The trends we've explored, from AI integration to data ethics and quantum computing, are guiding us toward a data-driven tomorrow. Excuse the pathos, but it is true, and our examples from sports, retail, finance, manufacturing, and transportation back it up.

The future of Big Data remains vast, promising untold possibilities for those who harness its power wisely. To succeed in this data-centric era, businesses must not only embrace Big Data but also adapt continuously, staying at the forefront of technological advancements.

In case you already have aspirations for incorporating Big Data, the Devico team is here to guide you on this transformative journey.
